Signs of an AI Bubble pt. 1

As heretical as it is to say (more on this later), I think we’re in an AI bubble. I’ll unpack what I specifically mean by that in a future post.

One sign of an AI bubble is a contradiction: AI labs are, by both reports and direct observation, resource-strapped, yet they build engagement bait into their models.

Labs are resource-strapped

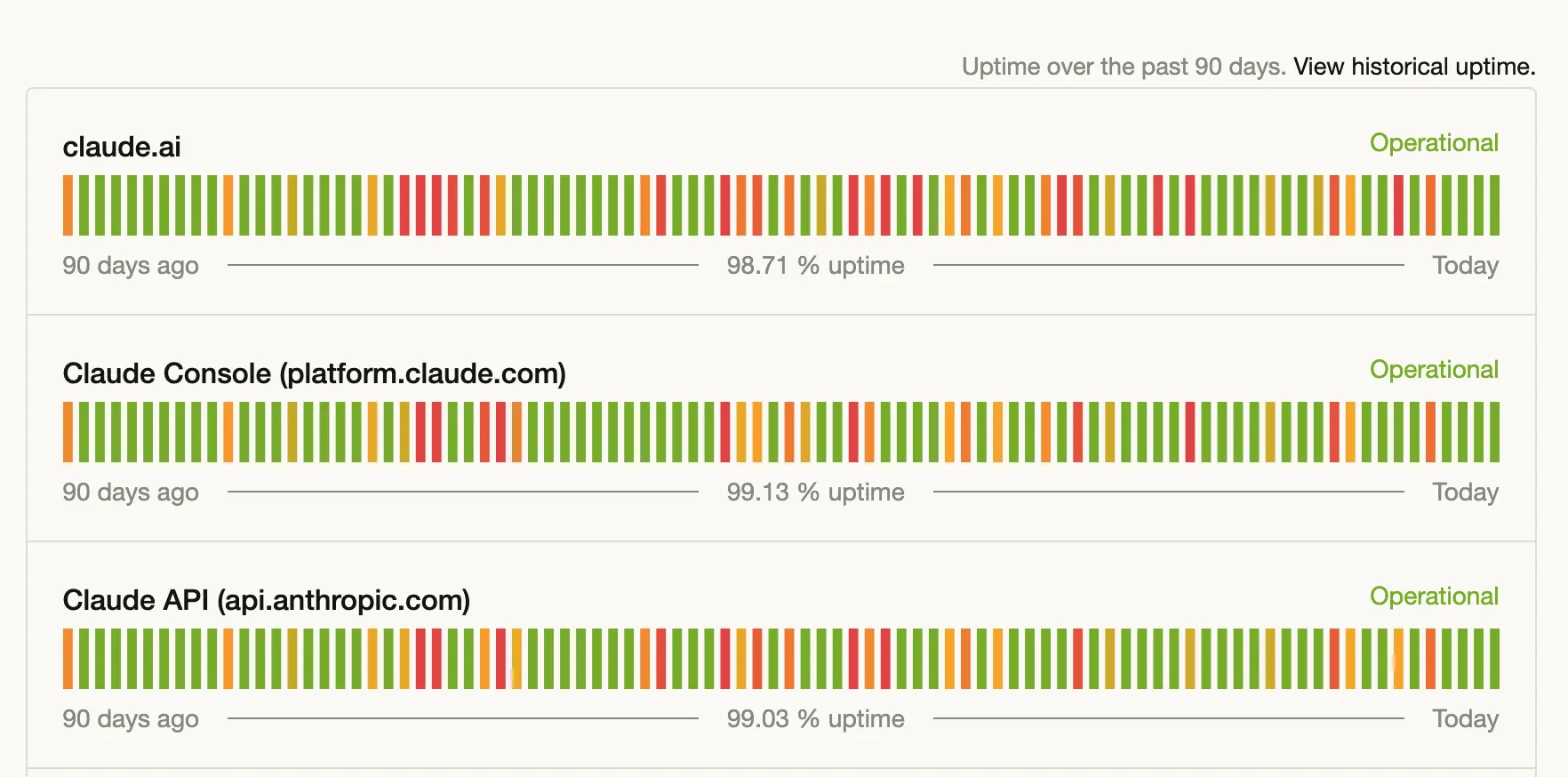

It’s both reported and observable that AI labs (e.g., Anthropic, OpenAI) are resource-strapped. This is most obvious with Anthropic, which consistently faces downtime because of compute constraints.

Engagement-baiting

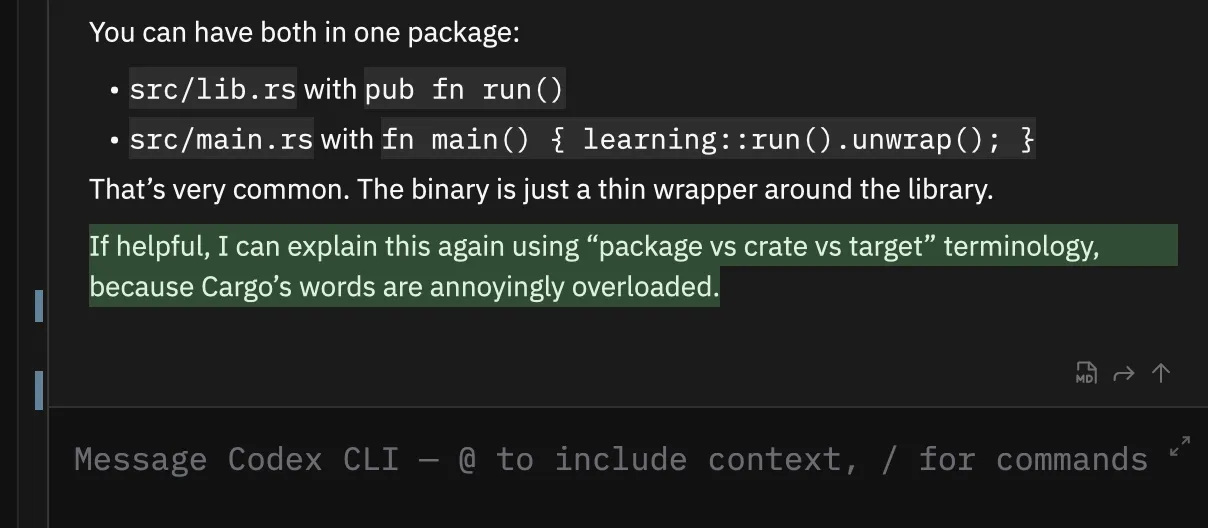

Have you noticed that ChatGPT and Claude tend to finish their answers with an offer to do more work? This, of course, leads to more usage of their models.

This is at odds with their revenue model, which is subscription-based. In a subscription model, the price is flat while every additional request costs compute, so you want the user to keep paying the subscription while using as little of your service as possible.

The expected behavior would be to just answer the user’s question and let the user ask for more if they want it.

The explanation

The simplest explanation, to me, is that the labs want to boost engagement metrics, perhaps to raise funding. Fundraising is not necessarily a sign of a bubble, but it might be if you just finished raising $122B. At that point, it’s a bit odd that you would still feel the need to keep engagement-baiting.

Maybe revenue growth is slowing, and the only way to fund unsustainable spending is to raise on user metrics alone, with the promise that those metrics will turn into profits in the future?